Why Most Engineers Fail in Nearshore Teams

A Cognitive Alignment Problem

The foundational failure of modern technology organizations is not a shortage of talent. It is a profound disconnect between how software is managed and how it is actually created. The industry operates under a persistent delusion. Managers treat software engineering as an assembly line. They assume it is predictable. They assume it is uniform. They assume it is governed by deterministic inputs and outputs. This is the Factory Fallacy. (Source: [PAPER-PLATFORM-ECONOMICS]). Software engineering is not a factory. It is a stochastic process. It is characterized by high variance. It is defined by significant uncertainty. When executives apply manufacturing principles to cognitive work, they build fragile systems. These systems are mathematically destined for delay. They are guaranteed to experience cost overruns. They inevitably lead to burnout.

As stated directly in Nearshore Platformed, "The quest for exceptional technology talent represents a defining challenge of our time." This challenge cannot be solved by simply acquiring more headcount. Adding bodies to a broken system accelerates the collapse of that system. The legacy nearshore vendor model amplifies these structural drags. It creates opaque workflows. It relies on anecdotal decisions. It depends on manual recruitment pipelines. It utilizes outdated compensation surveys. This results in hiring guesswork. It replaces plannable dates with vague timelines. It turns talent acquisition into a game of roulette.

The Factory Fallacy and Topological Friction

The instinct for any manager is to maximize the output of their most expensive asset. They push engineers toward maximum utilization. This goal seems logical on the surface. It is fundamentally incorrect. It violates the absolute laws of queuing theory. Little's Law governs Work in Progress. It ties average lead time to Work in Progress and throughput: lead time equals Work in Progress divided by throughput. Increasing Work in Progress while holding throughput constant necessarily increases lead time. When a team starts more work than it can complete, the average time to finish anything gets longer. Pushing teams to full capacity injects fragility into the system. Small deviations from estimates cascade. Queues form. Overall delivery slows down.
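As a rough illustration of Little's Law, the sketch below computes average lead time from Work in Progress and throughput. The function name and the team numbers are hypothetical, chosen only to show the relationship.

```python
def average_lead_time(wip_items: float, throughput_per_week: float) -> float:
    """Little's Law: average lead time = average WIP / average throughput."""
    if throughput_per_week <= 0:
        raise ValueError("throughput must be positive")
    return wip_items / throughput_per_week


# Hypothetical team that finishes 5 items per week (assumed figure).
for wip in (5, 10, 20):
    weeks = average_lead_time(wip, throughput_per_week=5.0)
    print(f"WIP={wip:>2} items -> average lead time {weeks:.1f} weeks")
```

With throughput held at 5 items per week, doubling Work in Progress doubles the average lead time.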

This phenomenon is described mathematically by Kingman's Limit. Wait times grow nonlinearly, in proportion to ρ/(1 − ρ) for utilization ρ, so they climb without bound as utilization approaches maximum capacity. Systems pushed to their limits will experience performance collapse under load. The result is a brittle operation. Any unexpected issue causes a disproportionate slowdown across the entire pipeline. This is why adding engineers reduces productivity. The system cannot absorb the coordination overhead. The communication edges multiply. The dependency surfaces expand. The review load increases. The synchronization cost becomes unbearable.
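A minimal sketch of both effects, assuming a single-server queue and Kingman's standard approximation for mean waiting time, together with the usual n(n − 1)/2 count of pairwise communication channels. The function names and default variability values are illustrative assumptions, not part of any cited model.

```python
def kingman_wait(utilization: float, mean_service_time: float,
                 cv_arrival: float = 1.0, cv_service: float = 1.0) -> float:
    """Kingman's approximation for mean queue wait in a single-server queue.

    wait ~= (rho / (1 - rho)) * ((ca^2 + cs^2) / 2) * mean service time
    """
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1)")
    variability = (cv_arrival ** 2 + cv_service ** 2) / 2
    return utilization / (1 - utilization) * variability * mean_service_time


def communication_channels(team_size: int) -> int:
    """Number of distinct pairs in a team of the given size."""
    return team_size * (team_size - 1) // 2


# Assumed mean service time of 1 day per work item.
for rho in (0.5, 0.8, 0.9, 0.95):
    print(f"utilization {rho:.2f} -> wait ~ {kingman_wait(rho, 1.0):.1f} days")

for n in (5, 10, 20):
    print(f"team of {n} -> {communication_channels(n)} pairwise channels")
```

The ρ/(1 − ρ) factor is what drives the steep climb near full utilization, while the channel count grows quadratically with team size.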

Conway's Law dictates that the architecture of the software will mirror the communication structures of the organization. When nearshore teams are bolted onto legacy onshore structures without cognitive alignment, topological friction is generated. The Monolith Gravity pulls everything into a centralized bottleneck. The nearshore team cannot move independently. They are tethered to the onshore core. The Rework Rate Coefficient spikes. Engineers find themselves fixing the same bug repeatedly. This is not a failure of individual talent. It is a failure of system design.

Sequential Effort and The Follow-the-Sun Fallacy

Software work is inherently sequential. It is probabilistic. The Factory Fallacy completely ignores this reality. Software engineering is not an additive process where tasks are simply stacked together. It is a complex network of sequential dependencies. The success of each subsequent step depends on the successful completion of the preceding one. This reality is captured by the Sequential Pipeline Reality O-Ring Invariants. The reliability of the final output is the product of the reliability of every single step in the chain. It is not the average. (Source: [PAPER-AI-REPLACEMENT]).

If a project consists of ten sequential steps, and each step has a high probability of success, the overall probability of the project succeeding without rework is still low. At 90 percent reliability per step, all ten steps succeed together only about 35 percent of the time. The cumulative effect of small probabilities of failure results in a massive risk of pipeline collapse. This multiplicative nature of risk explains why the body shop model is dangerous. Sourcing engineers based on resume keywords introduces high-variance nodes into a low-tolerance sequence. A single engineer with low architectural instinct can introduce technical debt that necessitates rework across the entire team. The pipeline halts. The system fails.
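The multiplicative effect can be checked with a few lines of arithmetic. This is a sketch that assumes the steps succeed or fail independently; the per-step reliabilities are illustrative figures, not measurements.

```python
import math


def pipeline_success_probability(step_reliabilities):
    """Probability that every step succeeds, assuming independent steps."""
    return math.prod(step_reliabilities)


# Ten sequential steps at three assumed per-step reliability levels.
for per_step in (0.99, 0.95, 0.90):
    overall = pipeline_success_probability([per_step] * 10)
    print(f"per-step {per_step:.2f} -> whole pipeline {overall:.3f}")
```

The average reliability of the ten steps is unchanged in each case; only the product reveals how quickly the chance of a clean run erodes.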

This brings us to The Synchronicity Physics. Time zones are a physical constraint on cognitive synchronization. The Follow-the-Sun Fallacy is a dangerous myth propagated by legacy vendors. It suggests that complex cognitive state can be handed off at the end of the day. It assumes that an offshore team can seamlessly pick up where the onshore team left off. This is false. Complex state cannot be serialized into a Jira ticket. When the night shift takes over, they lack the tacit knowledge required to execute safely. The night shift breaks the build.

The 4-Hour Rule is an absolute operational constraint. A minimum of four hours of synchronous overlap is required to maintain The Context Membrane. Without this membrane, the team fragments. Feedback loops stretch from minutes to days. Distributed teams stay busy but deliver less. They engage in motion without progress. They write code that does not integrate. Integration hell becomes the default state of the project.
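A minimal sketch of how the 4-Hour Rule might be checked for two teams. It assumes each working window is given as start and end hours in UTC on the same day and does not wrap past midnight; the function names and sample windows are hypothetical.

```python
MIN_OVERLAP_HOURS = 4  # threshold taken from the 4-Hour Rule


def overlap_hours(start_a: float, end_a: float,
                  start_b: float, end_b: float) -> float:
    """Hours shared by two same-day working windows expressed in UTC hours."""
    return max(0.0, min(end_a, end_b) - max(start_a, start_b))


def satisfies_four_hour_rule(window_a, window_b) -> bool:
    """True when the two (start, end) windows share at least four hours."""
    return overlap_hours(*window_a, *window_b) >= MIN_OVERLAP_HOURS


# Assumed working windows in UTC hours.
onshore = (14, 22)
nearshore = (13, 21)
far_offshore = (4, 12)

print(overlap_hours(*onshore, *nearshore))              # shared hours
print(satisfies_four_hour_rule(onshore, nearshore))
print(satisfies_four_hour_rule(onshore, far_offshore))
```

Windows that cross midnight would need to be split or normalized before this check applies.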

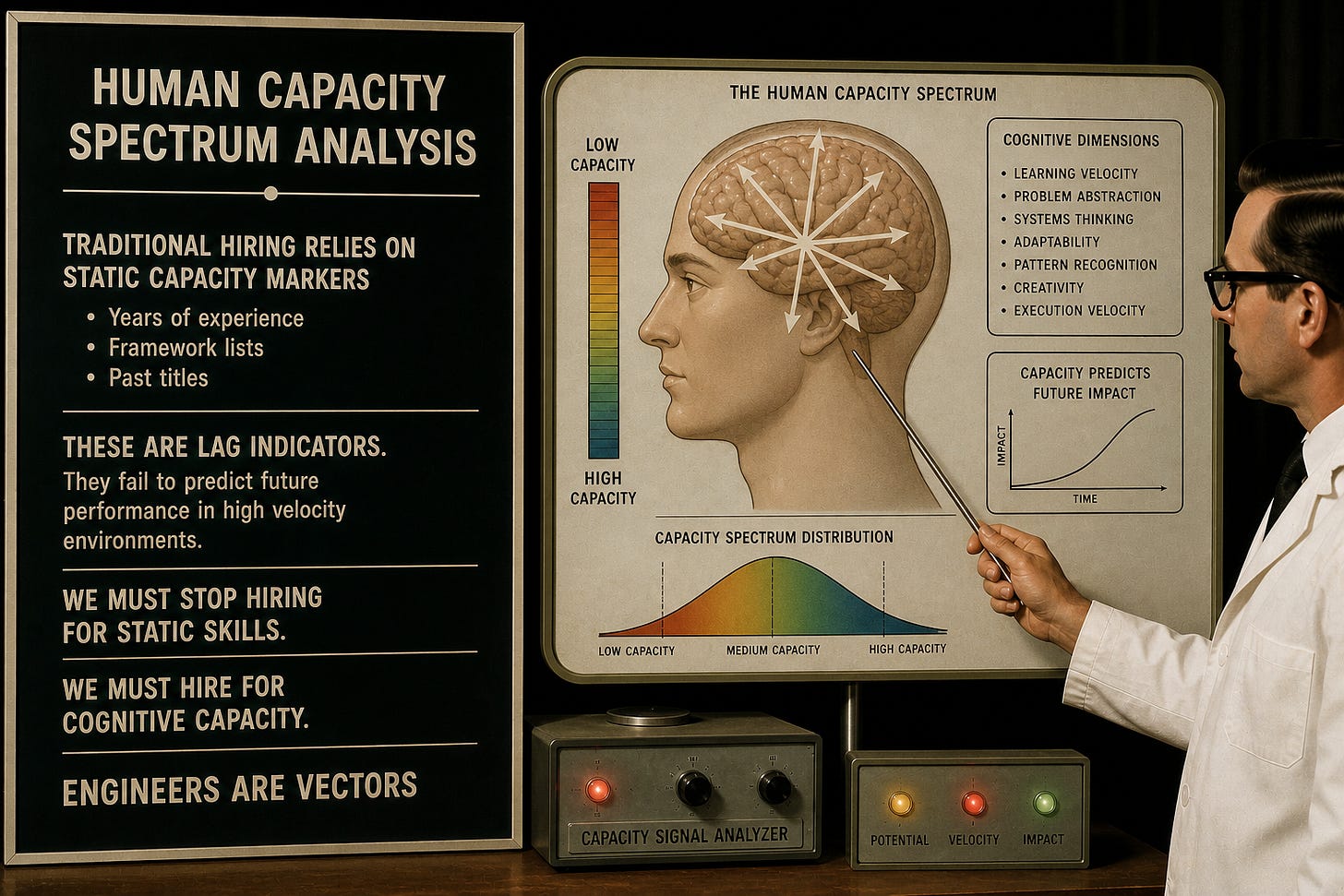

Human Capacity Spectrum Analysis

Traditional hiring relies on static capacity markers. It looks at years of experience. It looks at framework lists. It looks at past titles. These are lagging indicators. They fail to predict future performance in high-velocity environments. We must stop hiring for static skills. We must hire for cognitive capacity. Engineers are vectors. They possess magnitude and direction. (Source: [PAPER-HUMAN-CAPACITY]).

The core failure of modern recruitment is the resume fallacy. Resumes do not translate to results. The belief that past syntax usage predicts future system design capability is flawed. A developer's value is defined by the capacity spectrum they occupy. It is defined by the range of complexity they can handle under pressure. We utilize Human Capacity Spectrum Analysis to measure this vector. This framework maps talent across four distinct dimensions.

First is Architectural Instinct. This is the ability to visualize complex systems before code is written. It is a spatial reasoning trait. It is not a coding trait. Engineers with high architectural instinct anticipate failure modes. They identify scalability bottlenecks. They foresee integration friction intuitively. They can whiteboard architecture under pressure.

Second is Problem Solving Agility. This is the velocity at which an engineer traverses the solution space when variables change. High problem solving agility correlates with rapid root cause analysis. It indicates the ability to pivot without cognitive stall. It is the defining characteristic of elite debugging.

Third is Learning Orientation. This is the derivative of skill acquisition. It measures how fast the knowledge graph expands. In an era where frameworks have a short half-life, learning orientation is the only durable predictor of relevance. Will they survive the next framework shift? This is the Autodidact Signal. It is the velocity at which an engineer acquires and implements new structural concepts without formal instruction.

Fourth is Collaborative Mindset. This is the efficiency of information transfer between nodes. A high capacity individual with low collaborative mindset functions as a black box sink. They absorb resources but radiate little value to the network. They cannot code with others watching. They isolate themselves. They become a single point of failure.

Unlike scalar rankings, Human Capacity Spectrum Analysis assigns a multidimensional vector. This allows for spectral matching. We match candidate vectors to project complexity vectors. The alignment score is defined by the cosine similarity of these vectors. This approach is designed to prevent the overqualified mismatch. It is designed to prevent the Peter Principle promotion. It ensures that teams are built on compatible cognitive architectures.
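The alignment score can be sketched as a plain cosine similarity over the four dimensions. The vector ordering, the 0 to 10 scale, and the sample scores below are assumptions made for illustration; the source does not specify how the vectors are encoded.

```python
import math


def cosine_similarity(a, b) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_a * norm_b)


# Assumed order: architectural instinct, problem solving agility,
# learning orientation, collaborative mindset (hypothetical 0-10 scores).
candidate = [8, 6, 9, 4]
project = [7, 7, 8, 5]

print(f"alignment score: {cosine_similarity(candidate, project):.3f}")
```

Cosine similarity compares direction only, so two vectors with the same shape but different magnitudes score 1.0; any check on overall capacity level would have to be handled separately.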

Axiom Cortex: The Neuro Psychometric Evaluation Engine

To measure these cognitive vectors, we deploy Axiom Cortex. Axiom Cortex is strictly a Neuro Psychometric Evaluation Engine. It evaluates human talent. It is not a security tool. It is not a firewall. It is not a kill switch. It is not a continuous integration pipeline. It is a scientific instrument for talent evaluation. (Source: [PAPER-AXIOM-CORTEX]).

Axiom Cortex executes Phasic Micro Chunking. It processes interview transcripts through a Latent Trait Inference Engine. It eliminates interview hallucination. The engine operates in strict sequential phases. Phase zero validates pre-execution integrity. Phase one ingests and validates data. Phase two executes per-question micro analysis. It generates an ideal answer blueprint. It applies forensic natural language processing. It scores the foundational axioms. Phase three synthesizes the latent traits. Phase four assembles the final report.

We evaluate deep technical domains through this rigorous cognitive lens. We assess system design capabilities. We evaluate microservices architecture comprehension. We measure gRPC implementation knowledge. We analyze REST API design principles. We test event sourcing patterns. The engine does not look for keyword matches. It looks for conceptual fidelity. It looks for the underlying reasoning process.

The engine detects the Autodidact Signal through the authenticity incident. It asks candidates what they do not know. True autodidacts respond with a precise map of their own ignorance. They state clear boundaries around their knowledge. They do not bluff. The system rewards this authenticity. It penalizes hedging language. It tracks the ratio of first person pronouns versus collective pronouns. It measures ownership.
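One simple way to approximate the pronoun signal is to count first-person singular and collective pronouns in a transcript. The sketch below is a hypothetical illustration only: the word lists, the function names, and the choice of ratio are assumptions, and the source does not describe how the engine actually computes or weights this measure.

```python
import re

# Assumed word lists for illustration.
FIRST_PERSON = {"i", "me", "my", "mine", "myself"}
COLLECTIVE = {"we", "us", "our", "ours", "ourselves"}


def pronoun_counts(transcript: str):
    """Return (first-person singular count, collective count)."""
    words = re.findall(r"[a-z]+", transcript.lower())
    singular = sum(word in FIRST_PERSON for word in words)
    collective = sum(word in COLLECTIVE for word in words)
    return singular, collective


def first_person_share(transcript: str):
    """Share of first-person singular among all counted pronouns, or None."""
    singular, collective = pronoun_counts(transcript)
    total = singular + collective
    return singular / total if total else None


answer = "I designed the schema and we shipped it. My tests caught our bug."
print(pronoun_counts(answer))
print(first_person_share(answer))
```

A raw ratio like this says nothing on its own about ownership; it would only be one input among many.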

Axiom Cortex evaluates across the entire technology stack. It assesses cloud infrastructure across AWS, Azure, and Google Cloud. It evaluates containerization and orchestration with Docker and Kubernetes. It measures infrastructure as code proficiency with Terraform and CloudFormation. It tests continuous integration and deployment pipelines, including GitHub Actions and GitLab CI. It analyzes observability and monitoring skills with Prometheus and Grafana.

The engine extends into data engineering. It evaluates extract, transform, load (ETL and ELT) processes. It measures distributed computing knowledge with Apache Spark. It assesses data transformation tools such as dbt. It tests cloud data warehousing, including Snowflake. It evaluates data governance principles. It analyzes machine learning operations (MLOps). It measures large language model (LLM) integration skills.

The system evaluates frontend and backend frameworks with equal precision. It assesses React and TypeScript proficiency. It measures Angular architecture knowledge. It tests Vue.js implementation. It evaluates Next.js server-side rendering. It analyzes Node.js event loop comprehension. It measures Go concurrency patterns. It assesses Java Virtual Machine tuning. It evaluates Python data structures. It tests C# enterprise patterns. It measures Rust memory safety concepts.

The engine evaluates database architecture. It assesses PostgreSQL optimization. It measures MySQL replication. It tests MongoDB document design. It evaluates Cassandra distributed storage. It analyzes Redis caching strategies. It measures Elasticsearch indexing. It assesses vector database integration with Pinecone and Milvus.

Mathematical Validation and Bias Mitigation

Axiom Cortex is built on a foundation of rigorous mathematical validation. It employs a Nonparametric Latent Measurement Layer. It models the relationship between evidence vectors and latent traits using isotonic regression. It maximizes the posterior distribution to ensure accurate trait estimation. It utilizes Optimal Transport Alignment for Discourse. It computes Wasserstein distances between candidate discourse embeddings and ideal blueprint embeddings. It calculates trait-specific deltas to measure conceptual closeness.
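To make two of these building blocks concrete, here is a generic, dependency-free sketch of isotonic regression (via the pool-adjacent-violators algorithm) and of the one-dimensional Wasserstein distance between equal-size samples. These are textbook versions under simplifying assumptions: unit weights, inputs already ordered by evidence score, and scalar values instead of high-dimensional embeddings. They are not the engine's actual implementation, and all names and sample values are hypothetical.

```python
def isotonic_fit(values):
    """Least-squares non-decreasing fit using pool-adjacent-violators."""
    blocks = []  # each block holds [sum of values, count]
    for value in values:
        blocks.append([float(value), 1])
        # Merge backwards while the previous block's mean exceeds the last one's.
        while (len(blocks) > 1
               and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]):
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    fitted = []
    for total, count in blocks:
        fitted.extend([total / count] * count)
    return fitted


def wasserstein_1d(sample_a, sample_b) -> float:
    """1-Wasserstein distance between two equal-size one-dimensional samples."""
    if len(sample_a) != len(sample_b) or not sample_a:
        raise ValueError("samples must be non-empty and the same size")
    pairs = zip(sorted(sample_a), sorted(sample_b))
    return sum(abs(x - y) for x, y in pairs) / len(sample_a)


# Hypothetical trait observations ordered by increasing evidence score.
print(isotonic_fit([1, 3, 2, 4]))
# Hypothetical scalar projections of candidate and blueprint discourse.
print(wasserstein_1d([0, 1, 3], [5, 6, 8]))
```

In practice a library such as scikit-learn or SciPy would normally supply these routines; the pure-Python versions are shown only to expose the mechanics.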

The system applies Information Geometry for Calibration. It measures and penalizes miscalibration by computing Expected Calibration Error and Jeffreys Divergence. It constructs Network Psychometrics. It identifies key skills discussed in the evidence and estimates their partial correlations to build skill graphs. It executes a Bayesian network to model the hierarchical relationships between evidence, sub-metrics, latent traits, and the final score. All calculations incorporate calibrated uncertainty.
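The two calibration measures named here have standard definitions, sketched below in plain Python. Expected Calibration Error uses equal-width confidence bins, and Jeffreys divergence is the symmetrized Kullback-Leibler divergence. The bin count, function names, and sample data are assumptions for illustration; how the system applies or penalizes these values is not specified in the source.

```python
import math


def expected_calibration_error(confidences, outcomes, n_bins: int = 10) -> float:
    """Weighted mean gap between average confidence and accuracy per bin."""
    if len(confidences) != len(outcomes) or not confidences:
        raise ValueError("inputs must be non-empty and the same length")
    bins = [[] for _ in range(n_bins)]
    for confidence, outcome in zip(confidences, outcomes):
        index = min(int(confidence * n_bins), n_bins - 1)
        bins[index].append((confidence, outcome))
    total = len(confidences)
    error = 0.0
    for members in bins:
        if not members:
            continue
        avg_confidence = sum(c for c, _ in members) / len(members)
        accuracy = sum(o for _, o in members) / len(members)
        error += len(members) / total * abs(avg_confidence - accuracy)
    return error


def jeffreys_divergence(p, q) -> float:
    """KL(p||q) + KL(q||p) for strictly positive discrete distributions."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    if any(x <= 0 for x in p) or any(x <= 0 for x in q):
        raise ValueError("all probabilities must be strictly positive")
    return sum((pi - qi) * math.log(pi / qi) for pi, qi in zip(p, q))


# Hypothetical scorer that is 90% confident but right 3 times out of 4.
print(expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 1, 1, 0]))
print(jeffreys_divergence([0.8, 0.2], [0.5, 0.5]))
```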

Bias mitigation is a non-negotiable, system-critical directive. The Cortez Calibration Layer acts as a sophisticated filter. It applies algorithmic adjustments to raw scores based on detected interference patterns and cultural communication styles. It ensures we evaluate the pure technical and logical signal. It ignores linguistic noise. It utilizes Differential Item Functioning to ensure fairness across diverse populations. It calculates a Composite Integrity score that is explicitly aware of second-language artifacts. It normalizes language variance by scoring conceptual fidelity over grammatical perfection.

Security Architecture and Incident Response Symmetry

Axiom Cortex evaluates the human. The operational environment must secure the human. Security is not a behavioral expectation. It is a cryptographic absolute. (Source: [PAPER-PERF-FRAMEWORK]). We enforce Incident Response Symmetry. The nearshore team must operate under the exact same security constraints as the onshore core. There can be no deviation. There can be no exceptions. The blast radius of shadow IT is too massive to ignore.

This requires a Zero Trust Architecture. Access is governed by strict Single Sign-On protocols. Identity Providers manage every single authentication event. The System for Cross-domain Identity Management (SCIM) automates the provisioning and deprovisioning of access rights. Virtual Desktop Infrastructure ensures that source code never rests on local hardware. Mobile Device Management locks down the physical endpoint