Why Agentic AI Workflows Need Boring Old Software Fundamentals

A thesis on why you need a ubiquitous_language.md doc, why clean code is machine-readable infrastructure, and what the right mental shape is for an engineer in the AI tooling diaspora

Look, every time a new tool drops, the same story gets sold: just point the smart machine at your code and watch it ship features while you sleep. IMO the story is half true, because agents really are good, but the other half of the story gets quietly skipped. Agents copy whatever they see in your repo, so a clean codebase makes them sharper, and a messy codebase makes them messier, just way faster. So there ya have it: the tool is a mirror, not a magic wand. Makes ya think, no?

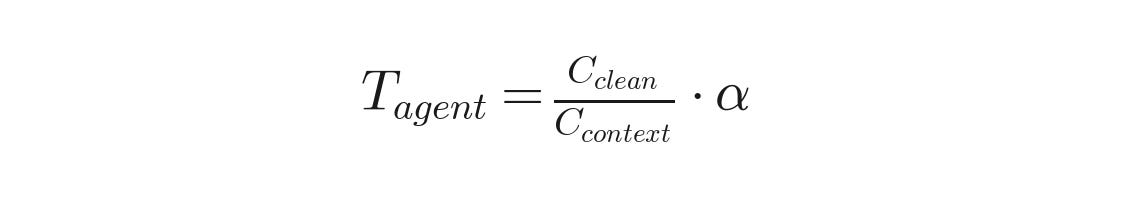

The whole argument boils down to a simple relationship: the agent throughput you actually get is bounded by how clean the code is, divided by how much context the agent has to load just to understand the mess, and multiplied by some agent capability factor.
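Written down as a rough sketch, with symbols picked just for this illustration (T for the throughput you get, Q for code cleanliness, C for the context the agent has to load, and κ for the agent capability factor), the bound looks like this:

$$
T \;\le\; \kappa \cdot \frac{Q}{C}
$$

In this framing, a better model raises κ, but a cleaner repo raises Q and lowers C at the same time, and those two are the levers your team actually controls.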

That is the whole reason a tiny file called ubiquitous_language.md, sitting right there in the root of your repo, might be one of the most useful files a team can write in 2026, no joke.

The Smart New Hire Who Has Never Met Your Team

The popular pitch around AI coding tools paints the agent as a universal cleaner: you pour it on any problem, and the problem just goes away by itself. The real picture looks more like a super sharp new hire who has read every public repo on earth but has never sat in your standups, never argued in your design reviews, and has no clue that when your domain people say "order" they mean something a little different from what your billing service calls an order.

Now, the new hire types fast, real fast, faster than any human you ever worked with, but speed without context is just confident wrongness arriving sooner. Agents do not throw red errors when they misread your domain. They produce clean-looking, well-formatted code that compiles, and that code quietly encodes a wrong picture of how your business actually works.

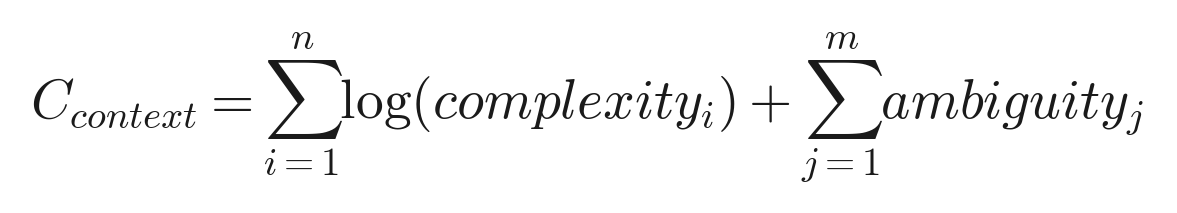

The cost of context loading can be modeled roughly as the sum of the log of each file's complexity, over every file the agent reads, plus a penalty for each ambiguity the agent has to resolve, where ambiguity comes from naming drift, dead code, and unclear boundaries.
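One rough way to write that down, using labels chosen for this sketch: $F$ is the set of files the agent reads, $c_f \ge 1$ is some complexity score for file $f$, $A$ is the set of ambiguities the agent has to resolve, and $p_a$ is the penalty for ambiguity $a$.

$$
C \;\approx\; \sum_{f \in F} \log c_f \;+\; \sum_{a \in A} p_a
$$

The log damps the cost of any single big file, while every ambiguity pays its own penalty, so in this model a pile of small naming drifts can outweigh one large but clearly named module.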

Lemme show you what the confident wrongness actually looks like in practice. Say you ask an agent to add a discount feature to your billing system, and the agent has no glossary to read, so the agent guesses based on what it sees in the codebase. Here is the kind of code the agent will cheerfully produce.

```typescript
// agent generated, no glossary loaded
function applyDiscountToOrder(orderId: string, percent: number) {
  const order = db.orders.find(orderId);
  order.total = order.total * (1 - percent / 100);
  order.save();
}
```

Looks fine, right? Compiles, runs, ships. Except the business meant Subscription, not Order, since the discount only applies to recurring billing, and the codebase already has an Order entity that means a one time purchase. So the agent just wrote a function that silently corrupts one time purchases, while leaving the actual subscription flow untouched, and nothing in the type system pushed back.

Here is the same feature, written by the same agent, with a glossary file loaded in context up front.

```typescript
// agent generated, ubiquitous_language.md loaded
function applyDiscountToSubscription(subscriptionId: string, percent: number) {
  const subscription = db.subscriptions.find(subscriptionId);
  subscription.recurringAmount = subscription.recurringAmount * (1 - percent / 100);
  subscription.save();
}
```

Same agent, same model, same prompt, totally different outcome, just because the words in the repo were pinned down on paper. So the fix is not to fire the agent, the fix is to give the agent what we should have been giving every junior engineer all along, which is a codebase that explains itself out loud.
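Part of why the first version slipped through is that nothing in the type system pushed back: an order ID and a subscription ID were both just a string. One hedged way to make the compiler push back is branded ID types. The sketch below is illustrative only. OrderId, SubscriptionId, and the in-memory Map standing in for db.subscriptions are hypothetical, not the article's real billing code.

```typescript
// Sketch only: the branded types and the Map-based store are hypothetical.

// Branded types: plain strings at runtime, distinct types at compile time.
type OrderId = string & { readonly __brand: 'OrderId' };
type SubscriptionId = string & { readonly __brand: 'SubscriptionId' };

const asOrderId = (raw: string): OrderId => raw as OrderId;
const asSubscriptionId = (raw: string): SubscriptionId => raw as SubscriptionId;

interface Subscription {
  id: SubscriptionId;
  recurringAmount: number;
}

// In-memory stand-in for a subscriptions table.
const subscriptions = new Map<SubscriptionId, Subscription>();

function applyDiscountToSubscription(id: SubscriptionId, percent: number): Subscription {
  const subscription = subscriptions.get(id);
  if (!subscription) {
    throw new Error(`unknown subscription: ${id}`);
  }
  subscription.recurringAmount = subscription.recurringAmount * (1 - percent / 100);
  return subscription;
}

// The mix-up from the first example no longer compiles:
// applyDiscountToSubscription(asOrderId('order-42'), 10);
// rejected at compile time: an OrderId is not assignable to a SubscriptionId.
```

Brands cost nothing at runtime, and they turn the glossary's "NOT an Order" line into something the compiler enforces, instead of a note the agent has to remember.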

| Day One Behavior | Human New Hire | Agent New Hire |
|---|---|---|
| Reads Slack history | Yes, over coffee with the team | No, never sees a single message |
| Picks up tribal knowledge | Through standups and pairing | Only through written files |
| Asks clarifying questions | Yes, when confused about naming | Sometimes, but invents names if rushed |
| Speed of first PR | Days or weeks | Minutes |
| Cost of getting domain wrong | Caught in code review | Compounds across many files fast |

The File You Wish You Had Written Three Years Ago

Eric Evans wrote about ubiquitous language in his 2003 book Domain-Driven Design, and the idea sounds almost too simple to bother with, but stay with me for a sec. The words your business people use, the words your product manager uses, and the words in your code should all be the exact same words, with the exact same meanings, all the way down to the variable names.

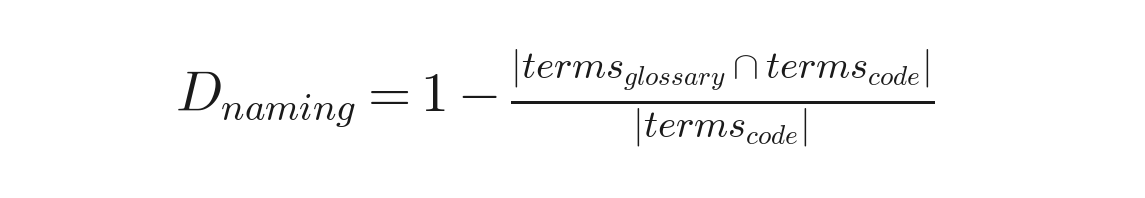

You can actually measure how far your repo drifts from the glossary, since naming drift is just the share of distinct domain terms in your code that do not appear anywhere in your written vocabulary.

A repo with low drift gives the agent a tight signal about which words to prefer, while a repo with high drift sends the agent shopping for synonyms in the wild.
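As a formula, drift is $D = |T_{\text{code}} \setminus G| \,/\, |T_{\text{code}}|$, where $T_{\text{code}}$ is the set of distinct domain terms in the code and $G$ is the glossary. Here is a minimal sketch of that ratio, assuming you already have both lists in hand. How you extract terms from code (type names, table names, folder names) is left open, and the matching is case-insensitive by choice.

```typescript
// Minimal sketch of the naming-drift ratio: the share of distinct code
// terms that the glossary never defines. Term extraction is assumed.
function namingDrift(codeTerms: string[], glossaryTerms: string[]): number {
  const glossary = new Set(glossaryTerms.map((term) => term.toLowerCase()));
  // Count each distinct code term once, however often it appears.
  const distinct = Array.from(new Set(codeTerms.map((term) => term.toLowerCase())));
  if (distinct.length === 0) {
    return 0; // no domain terms, nothing to drift from
  }
  const undefinedTerms = distinct.filter((term) => !glossary.has(term));
  return undefinedTerms.length / distinct.length;
}

// "Client" and "Purchase" are synonyms the glossary never blessed.
const drift = namingDrift(
  ['Subscription', 'Order', 'Customer', 'Client', 'Purchase'],
  ['Subscription', 'Order', 'Customer', 'User', 'Account'],
);
console.log(drift); // 0.4
```

Tracking the number over time matters more than any single value, since a rising ratio means new names are arriving faster than the glossary is being updated.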

For a human team, aligning the language gives you a nice productivity boost, but for an agent-driven team the same alignment is closer to a survival need than a nice-to-have. An agent reading your repo has no Slack history, no whiteboard memory, no folklore from the engineer who left back in 2023; it only sees what got written down.

A ubiquitous_language.md file in the root of your repo fixes the whole mess with embarrassingly little effort. Here is what entries in the file actually look like, copy-paste ready, so you can see the shape on disk.

```markdown
# Ubiquitous Language

## Subscription

A recurring agreement to pay for a plan on a fixed schedule, like monthly or yearly.

- Has a recurringAmount, a renewalDate, and a cancellationPolicy.
- NOT an Order, since orders are one time purchases.
- NOT an Entitlement, since entitlement is the runtime permission flag that gets created from a Subscription.

## Order

A single one time purchase event, paid up front, no recurrence.

- Has a totalAmount, a purchaseDate, and a refundWindow.
- NOT a Subscription, since orders never recur.
- NOT an Invoice, since the invoice is the document, while the order is the event.

## Customer

A real person or company who has paid us money at least once.

- NOT a User, since most users sign up without ever paying.
- NOT an Account, since one customer can hold many accounts.
```

Notice the bullets explaining what each term is not; honestly, the disambiguation is more useful than the definition itself. The agent reads the glossary the way a thoughtful new hire would, as a map before walking into the territory, and then it has your words, and only your words, to reach for when it writes new code.
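Because the format is so regular, tooling can read it too. Here is a sketch of a hypothetical parser for the layout above, assuming every term is a level-two heading and every disambiguation bullet starts with "- NOT a" or "- NOT an". Any other line is simply ignored.

```typescript
// Hypothetical parser for the glossary layout shown above: each "## Term"
// heading starts an entry, and each "- NOT a X" / "- NOT an X" bullet
// records a term it must not be confused with. Other lines are ignored.
interface GlossaryEntry {
  term: string;
  notToBeConfusedWith: string[];
}

function parseGlossary(markdown: string): GlossaryEntry[] {
  const entries: GlossaryEntry[] = [];
  let current: GlossaryEntry | undefined;

  for (const rawLine of markdown.split('\n')) {
    const line = rawLine.trim(); // also drops a trailing \r from CRLF files

    // Level-two heading: a new term begins ("# Title" does not match).
    const heading = /^##\s+(.+)$/.exec(line);
    if (heading) {
      current = { term: heading[1].trim(), notToBeConfusedWith: [] };
      entries.push(current);
      continue;
    }

    // Disambiguation bullet: capture the first word after "NOT a"/"NOT an".
    const exclusion = /^-\s+NOT an?\s+([A-Za-z]+)/.exec(line);
    if (exclusion && current) {
      current.notToBeConfusedWith.push(exclusion[1]);
    }
  }
  return entries;
}
```

From here, a CI step could check new identifiers against the parsed terms; that wiring depends on your stack and is left out.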

| Term | Plain English Meaning | Not To Be Confused With |
|---|---|---|
| Customer | A real person or company who paid us money | User, Account |
| User | Anyone with login credentials, paid or not | Customer, Member |
| Account | The billing container holding subscriptions | Workspace, Tenant |
| Subscription | A recurring payment agreement | Order, Entitlement |
| Order | A single one time purchase event | Subscription, Invoice |

Clean Code Stopped Being a Taste Thing

For years, clean code was kind of a religious war: one camp pushed hard for readable code, and the other camp said clever, dense code was fine, since the people who wrote it could maintain it. Both camps could point at shipped products and feel good about themselves, so the argument was real, but the stakes were honestly pretty small.

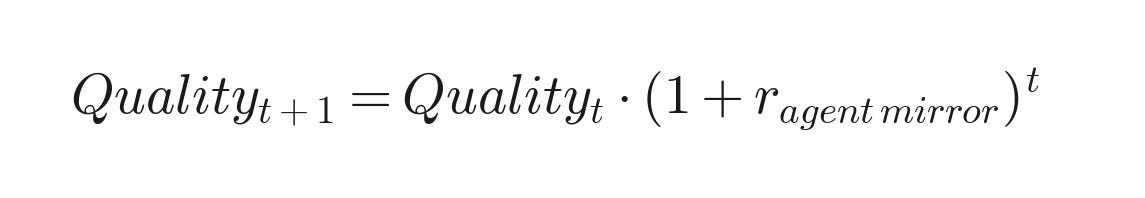

Agentic workflows flip the stakes hard, because clean code stopped being a taste thing and started being a throughput thing, no exaggeration. The compounding effect is real: agents mirror the code they see, so codebase quality at time t + 1 is just codebase quality at time t multiplied by a growth factor 1 + r, where the size of r depends on how strongly the agent mirrors the existing patterns, and its sign depends on whether those patterns are clean or messy.

If r is positive, your codebase gets better with every commit, and if r is negative, the rot compounds with every commit.
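As a recurrence, with $Q_t$ standing for codebase quality after commit $t$ and $r$ for the per-commit growth rate (both just labels for this sketch), the compounding reads:

$$
Q_{t+1} = Q_t\,(1 + r) \quad\Longrightarrow\quad Q_t = Q_0\,(1 + r)^t
$$

For a feel of the numbers: $r = 0.02$ over 50 commits gives $1.02^{50} \approx 2.69$ times the starting quality, while $r = -0.02$ leaves only $0.98^{50} \approx 0.36$ of it.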

Lemme show you what messy code actually does to an agent in practice, since the cost is not abstract. Here is a function from a realistic codebase, the kind you have probably seen a hundred times, where one function is doing way too much.

```typescript
// the kind of function agents struggle with
function processUser(u: any, t: string, opts?: any): any {
  if (t === 'new') {
    if (u.em && u.em.includes('@')) {
      const r = db.q('SELECT * FROM accs WHERE em = ?', [u.em]);
      if (r) { return { s: 0, m: 'dup' }; }
      // ... 40 more lines of mixed concerns
    }
  } else if (t === 'upd') {
    // ... 30 more lines
  }
}
```

If you ask an agent to fix a bug in the function, the agent has to load the whole thing into context, untangle what t means versus opts versus u, and try to guess what s and m on the return are supposed to represent. The agent will produce something, sure, but the agent will probably introduce a new bug somewhere else, since the function does not signal its own boundaries clearly.

Here is the same logic, written the boring way, with names doing the work.

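```typescript
// the kind of function agents tend to fix correctly on the first try
type SignupResult = { status: 'ok' | 'duplicate'; message: string };

// Helpers assumed to live elsewhere in the codebase (not shown here).
declare function isValidEmail(email: string): boolean;
declare function findCustomerByEmail(email: string): { email: string } | undefined;
declare function createCustomer(email: string): SignupResult;

function signUpNewCustomer(email: string): SignupResult {
  if (!isValidEmail(email)) {
    return { status: 'duplicate', message: 'invalid email' };
  }

  const existing = findCustomerByEmail(email);
  if (existing) {
    return { status: 'duplicate', message: 'email already registered' };
  }

  return createCustomer(email);
}
```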

Same logic, same business rules, but now the agent can read the function in three seconds, knows exactly what changes affect what, and produces fixes that stay inside the right boundaries.

| Fundamental | Cost of Skipping, Pre-Agent Era | Cost of Skipping, Agent Era |
|---|---|---|
| Clear function names | Slower onboarding for humans | Agent invents new names, drifts vocabulary |
| Small focused modules | Harder code review for the team | Agent burns tokens loading the whole module |
| Tests that fail loudly | Bugs caught later in QA | Agent ships breaking changes confidently |
| Predictable folder layout | Annoying for new hires | Agent guesses wrong, creates duplicate files |
| Comments explaining the why | Nice to have | Agent removes the why during refactor |

Greenfield, And The Habits Worth Forming Early

If you are kicking off a brand new project today, you have an advantage earlier generations of engineers never had: you can build the codebase as if an agent will be reading the repo on day one, because, honestly, one will be. So a few small habits are worth locking in early, before the cruft has time to settle in.

Start with the glossary, IMO, since writing ubiquitous_language.md before the first model file forces the team to argue about the words now, instead of finding the disagreements buried in the code six months from now. Tests are the next big lever, even minimal ones, since a test pins behavior down in executable form. Here is what a useful first test looks like, the kind that actually tells an agent what not to break during a refactor.

```typescript
// a tiny test that pays for itself ten times over
// (assumes a Jest-style runner that provides test and expect as globals)
import { signUpNewCustomer } from './customer';

test('a new email creates a customer with status ok', () => {
  const result = signUpNewCustomer('jane@example.com');
  expect(result.status).toBe('ok');
});

test('a duplicate email returns duplicate status, not ok', () => {
  // separate address, so this test does not depend on the one above
  signUpNewCustomer('sam@example.com');
  const result = signUpNewCustomer('sam@example.com');
  expect(result.status).toBe('duplicate');
});

test('an invalid email returns duplicate with invalid email message', () => {
  const result = signUpNewCustomer('not-an-email');
  expect(result.message).toBe('invalid email');
});
```

Three tests, maybe ten minutes of work, but now any agent refactoring the function has a hard signal for what the function is supposed to do, and it cannot silently change the contract during a cleanup pass without a test going red.
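The tests import signUpNewCustomer from ./customer, and the helpers behind it never appear in full. Here is one hypothetical way to back that module so the whole thing runs end to end. The Map-based store, the simple email regex, and the lowercase normalization are all assumptions for this sketch, not part of the original.

```typescript
// customer.ts: a hypothetical, self-contained version of the module the
// tests import. An in-memory Map stands in for the real database, and the
// email check is a deliberately simple regex, not full RFC 5322 validation.
export type SignupResult = { status: 'ok' | 'duplicate'; message: string };

const customersByEmail = new Map<string, { email: string }>();

function isValidEmail(email: string): boolean {
  // Something, an @, something, a dot, something; no whitespace.
  return /^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email);
}

function findCustomerByEmail(email: string): { email: string } | undefined {
  return customersByEmail.get(email.toLowerCase());
}

function createCustomer(email: string): SignupResult {
  customersByEmail.set(email.toLowerCase(), { email });
  return { status: 'ok', message: 'customer created' };
}

export function signUpNewCustomer(email: string): SignupResult {
  if (!isValidEmail(email)) {
    // Keeps the contract the tests expect: status 'duplicate', message 'invalid email'.
    return { status: 'duplicate', message: 'invalid email' };
  }
  if (findCustomerByEmail(email)) {
    return { status: 'duplicate', message: 'email already registered' };
  }
  return createCustomer(email);
}
```

Swapping the Map for a real repository later should not touch the tests, which is exactly the boundary you want an agent to respect.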

| Step | File | What The File Does For The Agent |
|---|---|---|
| 1 | ubiquitous_language.md | Locks domain vocabulary so agents stop drifting names |
| 2 | README.md | Explains why the project exists, plus how to run it |
| 3 | CONTRIBUTING.md | Sets coding conventions and PR norms |
| 4 | First failing test | Pins down behavior the agent must preserve |
| 5 | ARCHITECTURE.md | Walks through the high level system flow |

When you can swing it, keep the directory layout flat, predictable, and named after domain ideas rather than technical layers: folders like subscriptions and billing instead of controllers and services. None of these habits are new, and your tech lead from 2018 was already telling you the same things, but they stopped being just good practice and started being load-bearing infrastructure for working with non-human teammates.

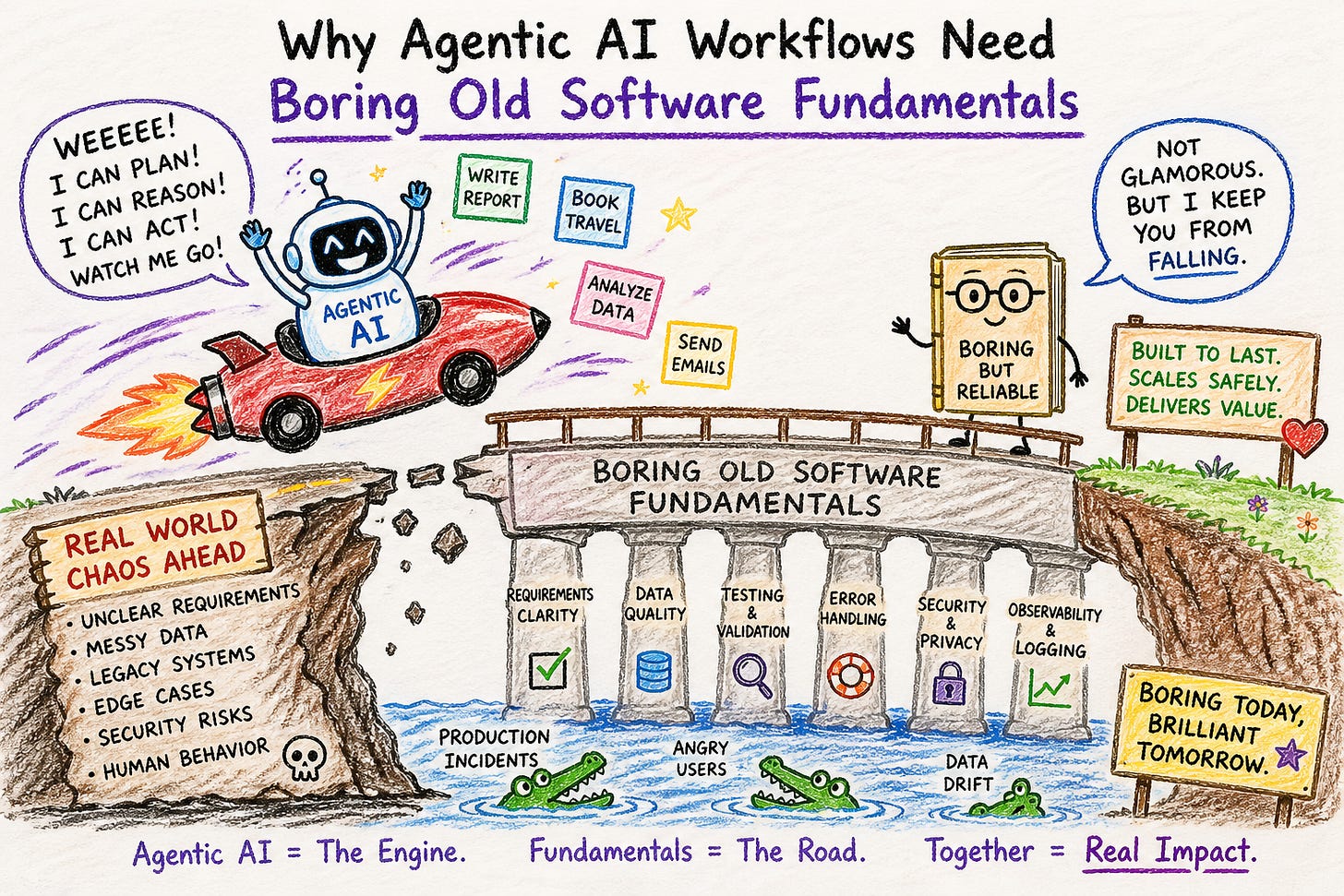

The Right Mental Shape For The AI Tooling Diaspora

Which brings us to the harder question, the one most teams are still ducking, which is, what is the right mental shape for an engineer in the new era? The phrase AI tooling diaspora actually fits the situation pretty well, since the tools have multiplied, and scattered, and now there are agents in your terminal, agents in your IDE, agents in your browser, agents driving your spreadsheets, and agents talking to other agents through orchestration frameworks.

The value an engineer actually adds in the new era can be sketched roughly as taste multiplied by reading skill, plus the leverage from writing good specs, minus the cost of losing ownership feel.
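One way to write that sketch down, with labels chosen here for illustration: τ for taste, ρ for reading skill, σ for the leverage from good specs, and λ for the cost of losing ownership feel.

$$
V \;\approx\; \tau \cdot \rho \;+\; \sigma \;-\; \lambda
$$

The product is the interesting part: taste without reading skill, or reading skill without taste, adds little on its own, because either factor near zero drags the whole term toward zero.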

The first two terms grow with practice, while the last term grows quietly when you stop reading what the agent ships, so the trick is keeping all three in balance.

| Mental Shape | How The Engineer Works | Failure Mode |
|---|---|---|
| Agent As Faster Keyboard | Uses agent for autocomplete and boilerplate | Productivity ceiling stays low, agent underused |
| Agent As Junior Engineer | Delegates implementation, reviews diffs | Engineer slowly loses feel for the codebase |
| Agent As Editorial Staff | Engineer is editor in chief, agents are writers | Takes real skill, no shortcut available |

The wrong mental model, and IMO the most popular one, treats the agent like a faster keyboard: engineers use agents to autocomplete, to spit out boilerplate, and to write tests they were going to write anyway. These engineers get a small productivity bump and then stop right there, because their relationship to the code never really changes. The human is still the author, and the agent is just a stenographer who types really fast.

A slightly better mental model treats the agent like a junior engineer: the human delegates, reviews, redirects, and writes specs instead of writing code by hand. These engineers get more done, but the model has a quiet failure mode. The engineer slowly loses the feel of the codebase and ends up managing code they no longer really understand, which works fine until something breaks in a way the agent cannot fix on its own.

The mental shape that actually holds up over time looks more like an editor in chief. The engineer takes responsibility for the codebase as a whole artifact, including the vocabulary, the structure, the taste, and the standards, and uses agents like a big, capable, sometimes wrong staff of writers. The editor in chief reads everything that ships, rejects work that does not fit the voice of the publication, and invests in style guides (ubiquitous_language.md is one), because style guides are how you scale your judgment across a team you cannot personally watch every minute.

The shape takes real skill to grow into, no shortcut available, since you cannot edit code you cannot read, and you cannot enforce taste you cannot articulate, and you cannot catch a wrong answer if you do not know what a right answer looks like in the first place. The engineers who actually thrive in the AI tooling diaspora will not be the ones who prompt the cleverest, IMO, but the ones who understand, slowly and deeply, what software is supposed to look like, and who treat their codebases like living documents, where every reader, human or machine, can pick up the file and understand what the file is trying to say.

Not so fast...there, Chuck!

So there ya have it, the fundamentals were never really for us alone, since we were always writing for the next reader, and the next reader, more and more these days, is a machine, and machines, turns out, have very strong opinions about clean code, makes ya think, no?