I Got Tired of Nearshore Vendor Guessing, So We Built a Replacement.

By Lonnie McRorey, CEO & Co-Founder, TeamStation AI

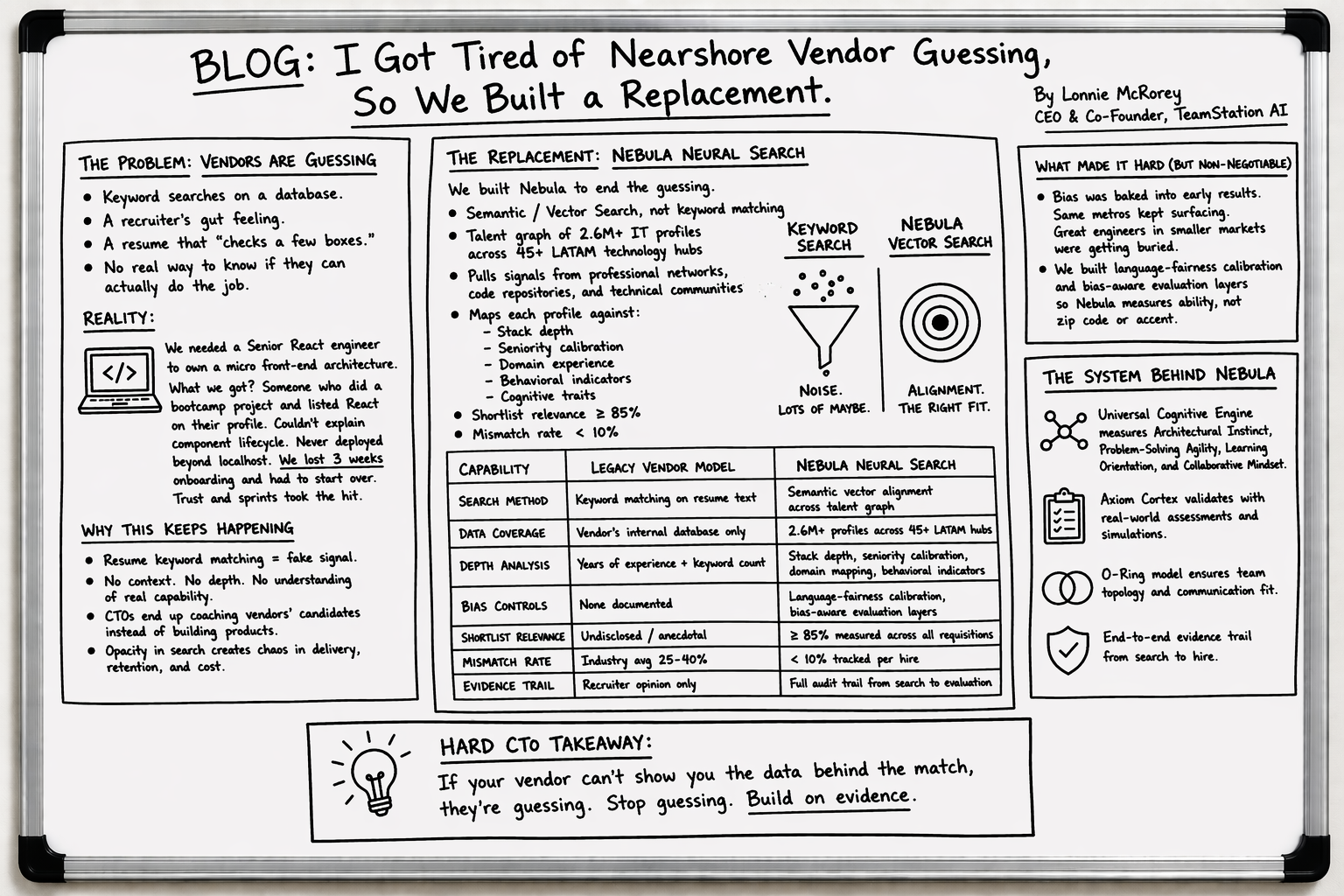

Over the past 25 years I have sat on both sides of the nearshore staffing table, as the client getting burned and as the operator trying to fix what was broken. And the one thing that kept showing up in every bad engagement I witnessed or inherited was this: the vendor had no real way to find the right engineer for the job. They were guessing. Keyword searches on a database, a recruiter’s gut feeling and a resume that checked a few boxes. That was the whole system.

I remember one engagement where we needed a senior React engineer who could own a micro-frontend architecture for a product serving millions of users. What showed up was someone who had touched React in a bootcamp project and listed it on their profile. That person could not walk through a component lifecycle, could not explain state management and had never deployed anything beyond localhost. We lost three weeks onboarding them before we had to start the search over. The business paid for that in delayed sprints and a client relationship that got real shaky real fast.

That experience, multiplied across dozens of engagements with different vendors over the years, is what led me to build Nebula Neural Search inside TeamStation AI. As Dan Diachenko and I wrote in our book Platforming the Nearshore IT Staff Augmentation Industry, the old ways of finding and engaging tech talent are broken at the root, and no amount of recruiter hustle fixes a system that was never designed for precision in the first place.

Why Resume Keyword Matching Keeps Wasting Everyone’s Time

Here is what most people in this industry do not want to say out loud. The majority of nearshore staffing platforms still match candidates to roles using keyword searches. The client says “React, Node, PostgreSQL” and the system returns every profile in the database that has those three words somewhere on the page. There is no context. There is no depth. There is no understanding of whether that person used PostgreSQL to build a production data layer or just followed a tutorial on YouTube.
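To make that failure mode concrete, here is a minimal sketch of a Boolean keyword filter of the kind described above. The profile texts and function names are hypothetical, and this is not any particular vendor's system; it only shows why two very different engineers come back as equally good matches.

```python
# Toy illustration of Boolean keyword matching on resume text.
# The profiles and function names are hypothetical.
PROFILES = {
    "candidate_a": "Owned the production PostgreSQL data layer behind a React and Node platform.",
    "candidate_b": "Followed a YouTube tutorial that used React, Node and PostgreSQL.",
}


def keyword_match(text: str, keywords: list[str]) -> bool:
    """Return True if every keyword appears somewhere in the text (case-insensitive)."""
    lowered = text.lower()
    return all(keyword.lower() in lowered for keyword in keywords)


def keyword_search(profiles: dict[str, str], keywords: list[str]) -> list[str]:
    """Return the names of all profiles that contain every keyword."""
    return sorted(name for name, text in profiles.items() if keyword_match(text, keywords))


if __name__ == "__main__":
    # Both candidates pass: the filter sees the words, not the depth behind them.
    print(keyword_search(PROFILES, ["React", "Node", "PostgreSQL"]))
    # prints ['candidate_a', 'candidate_b']
```

The filter has no notion of whether "PostgreSQL" appears next to "production" or next to "tutorial", which is exactly the missing context.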

I have talked with CTOs across the United States who tell me the same story. They get a shortlist from their vendor, the resumes look decent, and then the technical screen reveals that the skill depth is not even close to what was represented. Engineering leads end up spending more time coaching, teaching and guiding the vendor’s candidates than building the product, which delays critical deliverables and frays the trust between teams.

The model needed to change from the ground up. I wrote about every failure mode I encountered over two decades in our CTO Playbook, and the pattern is always the same: opacity at the search layer creates chaos downstream in delivery, retention and cost.

What Nebula Does That Nobody Else Was Willing To Build

Nebula Neural Search is a semantic alignment engine that we built on top of a talent graph covering over 2.6 million IT profiles across more than 45 technology hubs in Latin America. We pull data from professional networks, code repositories and technical communities, and the system maps each profile against stack depth, seniority calibration, domain experience and behavioral indicators that go way beyond what a resume can tell you. You can see how Nebula fits into our full integrated services stack, where sourcing, vetting, devices, payroll and compliance all run under one SLA.

The difference between Nebula and a keyword search is the difference between asking “does this person have the word React on their profile” and asking “can this person actually own a front-end architecture at the level my team needs right now.” Nebula answers the second question. That is why our shortlist relevance sits above 85 percent and our mismatch rate has dropped below 10 percent. Those numbers come from tracking every single requisition through the platform and measuring what actually happened after the hire.

In the book we describe this as moving from Boolean keyword matching to operating in vector space, where we infer latent traits rather than scanning for surface-level labels. Our System Doctrine lays out the science behind this, what we call the Universal Cognitive Engine model, which measures Architectural Instinct, Problem-Solving Agility, Learning Orientation and Collaborative Mindset across every candidate Nebula surfaces.
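For readers who have not worked with vector search, a minimal sketch of the idea: represent the role and each candidate as vectors, then rank by cosine similarity instead of checking for words. The four dimensions below are loosely named after the traits above, but the numbers are hand-written and hypothetical. Real systems learn their vectors from data, and this is not how Nebula or the Universal Cognitive Engine computes anything internally.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_candidates(role: list[float], candidates: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Sort candidates from most to least aligned with the role vector."""
    scored = [(name, cosine_similarity(role, vector)) for name, vector in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical trait vectors. Dimension order:
# [architectural instinct, problem-solving agility, learning orientation, collaborative mindset]
ROLE = [0.9, 0.7, 0.5, 0.6]  # a front-end architecture owner
CANDIDATES = {
    "candidate_a": [0.8, 0.7, 0.6, 0.6],
    "candidate_b": [0.1, 0.4, 0.9, 0.3],
}

if __name__ == "__main__":
    for name, score in rank_candidates(ROLE, CANDIDATES):
        print(f"{name}: {score:.2f}")
```

Both hypothetical candidates could pass the same keyword filter, but in vector space candidate_a ranks clearly ahead (about 0.99 versus 0.70) because its profile points in the same direction as the role.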

Legacy Vendor Model vs. Nebula Neural Search

| Capability | Legacy Vendor Model | Nebula Neural Search |
|---|---|---|
| Search Method | Keyword matching on resume text | Semantic vector alignment across talent graph |
| Data Coverage | Vendor's internal database only | 2.6M+ profiles across 45+ LATAM hubs |
| Depth Analysis | Years of experience + keyword count | Stack depth, seniority calibration, domain mapping, behavioral indicators |
| Bias Controls | None documented | Language-fairness calibration, bias-aware evaluation layers |
| Shortlist Relevance | Undisclosed / anecdotal | ≥85% measured across all requisitions |
| Mismatch Rate | Industry average 25-40% | <10% tracked per hire |
| Evidence Trail | Recruiter opinion only | Full audit trail from search to evaluation |

The Bias Problem We Refused To Ship Around

The hardest part of building Nebula was not the data or the algorithms. It was the bias. Early versions of the engine kept surfacing the same profiles from the same major metros over and over again while qualified engineers in smaller markets across Colombia, Argentina and Mexico got buried in the results. We saw it happening, and we knew that if we shipped the product like that we would just be automating the same inequities that recruiters had been practicing manually for decades.

We spent months building language-fairness calibration and bias-aware evaluation layers into the system. It delayed our roadmap, it cost us resources and it tested the patience of everyone on the team. But shipping a search engine that only worked for candidates from Mexico City and Buenos Aires while ignoring talent in Guadalajara, Medellín and Córdoba was not something we were willing to do. The LATAM talent economics are clear, the depth of engineering capacity across these markets is real, and a system that cannot see it is a system that is failing both the client and the engineer. If we were going to talk about transparency as a company, the technology had to reflect that commitment from the first line of code.
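One simple way to detect the metro bias described above is an exposure check: compare each hub's share of a shortlist with its share of the qualified pool. This is a generic representation check, not TeamStation's language-fairness calibration, and the hub counts and the 0.8 threshold below are made up for illustration.

```python
from collections import Counter


def exposure_ratios(qualified_hubs: list[str], shortlist_hubs: list[str]) -> dict[str, float]:
    """For each hub: (share of the shortlist) / (share of the qualified pool).

    1.0 means the hub appears in proportion to its qualified talent;
    values well below 1.0 mean qualified engineers there are being buried.
    """
    if not qualified_hubs or not shortlist_hubs:
        return {}
    pool = Counter(qualified_hubs)
    shown = Counter(shortlist_hubs)  # missing hubs count as 0
    n_pool, n_shown = len(qualified_hubs), len(shortlist_hubs)
    return {hub: (shown[hub] / n_shown) / (count / n_pool) for hub, count in pool.items()}


def underexposed(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Hubs whose exposure ratio falls below an (arbitrary, illustrative) threshold."""
    return sorted(hub for hub, ratio in ratios.items() if ratio < threshold)


# Hypothetical numbers: 100 qualified engineers, a top-10 shortlist drawn from only two metros.
QUALIFIED = (["Mexico City"] * 40 + ["Buenos Aires"] * 30 + ["Guadalajara"] * 15
             + ["Medellín"] * 10 + ["Córdoba"] * 5)
SHORTLIST = ["Mexico City"] * 6 + ["Buenos Aires"] * 4

if __name__ == "__main__":
    ratios = exposure_ratios(QUALIFIED, SHORTLIST)
    print(underexposed(ratios))
```

In this made-up example Mexico City is over-represented (ratio 1.5) while Guadalajara, Medellín and Córdoba get zero exposure despite holding 30 percent of the qualified pool, which is the pattern the early engine showed.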

Our book dedicates an entire section to responsible AI in practice, specifically bias mitigation, transparency through Explainable AI, and the human oversight layer that sits on top of every automated decision Nebula makes. We published the underlying science in two peer-reviewed papers on SSRN because we believe that if your methodology cannot survive scrutiny, it should not be touching anyone’s career.

Team Topologies and the O-Ring Problem: Why Finding Talent Is Not Enough

Most people in this industry treat hiring as a standalone transaction. Find a person, place them on a team, move on. But our Engineering Doctrine proves why that thinking fails. We model engineering teams as a Sequential Probability Network, and the math is unforgiving. Under the O-Ring Invariant, each unit of effort adds value only when the rest of the chain is already engaged. Failure at an upstream node renders downstream brilliance mathematically useless.

What that means in plain language is this: if Nebula finds you a world-class front-end engineer but your integration layer is held together by a warm body who cannot own the architecture, the whole pipeline breaks. A warm body is what our doctrine calls a Net Negative Producer, someone who consumes more value in review time than they produce in code. Nebula was built to prevent that by measuring not just individual skill depth but how a candidate fits the topology of the team they are joining.
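The arithmetic behind that claim can be sketched in a few lines. This is a minimal sketch of O-Ring-style multiplicative logic, assuming each stage of delivery succeeds independently with a fixed probability; the stage names and reliabilities are hypothetical, and this simplification is meant for intuition, not as the Engineering Doctrine's Sequential Probability Network itself.

```python
import math


def chain_success(reliabilities: list[float]) -> float:
    """Probability that every stage in a sequential chain succeeds.

    Assumes each stage succeeds independently with the given probability,
    so the chain's result is the product of the stages (O-Ring style).
    """
    return math.prod(reliabilities)


# Hypothetical per-stage reliabilities: design, API, integration, front end.
STRONG_TEAM = [0.95, 0.95, 0.95, 0.95]
WEAK_INTEGRATION = [0.95, 0.95, 0.50, 0.95]
WEAK_INTEGRATION_STAR_FRONT_END = [0.95, 0.95, 0.50, 0.99]

if __name__ == "__main__":
    for label, team in [
        ("strong team", STRONG_TEAM),
        ("weak integration layer", WEAK_INTEGRATION),
        ("weak integration + star front end", WEAK_INTEGRATION_STAR_FRONT_END),
    ]:
        print(f"{label}: {chain_success(team):.2f}")
```

With these made-up numbers, upgrading the front end from 0.95 to 0.99 only moves the chain from about 0.43 to about 0.45, while fixing the weak integration layer takes it to about 0.81. The weakest link dominates the product.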

Engineering Doctrine: Team Topology Science

| Doctrine Concept | What It Means | Why It Matters for Hiring |
|---|---|---|
| O-Ring Invariant | Each node in the chain adds value only when the rest is engaged | One weak hire breaks the entire delivery pipeline |
| Sequential Probability Network | Teams function as probability gates, not interchangeable seats | Nebula evaluates team topology fit, not just individual skill |
| Net Negative Producer | A warm body consuming more review time than code output | Axiom Cortex filters these before they reach your sprint |
| Metacognitive Conviction Index | Measures if confidence matches actual knowledge | Catches Dunning-Kruger cases before 6 months of cleanup |
| Universal Cognitive Engine | Infers Architectural Instinct, Problem-Solving Agility, Learning Orientation, Collaborative Mindset | Replaces Boolean keyword matching with vector-space evaluation |

This is also why we paired Nebula with Axiom Cortex, our cognitive AI engine that runs evidence-based technical evaluations using the BARS (Behaviorally Anchored Rating Scales) methodology. BARS ties every score to an observable behavior, not to a feeling or an impression. But Axiom Cortex goes further than scoring. It measures what our doctrine calls the Metacognitive Conviction Index, which answers the question every CTO should be asking: does this candidate’s confidence actually match their knowledge, or are we looking at a Dunning-Kruger case that will cost us six months of cleanup?
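To show what "every score tied to an observable behavior" means structurally, here is a minimal BARS sketch for a single competency, React state management, echoing the opening story. The four-point scale, the anchor wording, the class names and the `rate` helper are all hypothetical; this is not Axiom Cortex's actual rubric or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Anchor:
    score: int
    behavior: str  # the observable behavior that justifies this score


@dataclass(frozen=True)
class Rating:
    score: int
    anchor: str    # the behavior the score is tied to
    evidence: str  # what the evaluator actually observed


# Hypothetical BARS scale for one competency: React state management.
STATE_MANAGEMENT_SCALE = (
    Anchor(1, "Cannot explain where component state lives or how it updates."),
    Anchor(2, "Uses local state correctly but cannot explain when to lift it."),
    Anchor(3, "Explains lifting state and chooses between context and a store."),
    Anchor(4, "Designs state boundaries for a multi-team, micro-frontend app."),
)


def rate(scale: tuple[Anchor, ...], score: int, evidence: str) -> Rating:
    """Record a score only if it maps to a defined anchor and cites observed evidence."""
    anchors = {anchor.score: anchor.behavior for anchor in scale}
    if score not in anchors:
        raise ValueError(f"score {score} has no behavioral anchor")
    if not evidence.strip():
        raise ValueError("a BARS score must cite observed evidence")
    return Rating(score, anchors[score], evidence)


if __name__ == "__main__":
    rating = rate(STATE_MANAGEMENT_SCALE, 2,
                  "Kept all state in one component; could not say when to lift it.")
    print(rating.score, rating.anchor)
```

The design choice is that a score cannot exist on its own: it is rejected unless it lands on a defined behavioral anchor and carries a note of what was actually observed, which is what makes the result auditable later.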

Nebula surfaces the shortlist, Axiom Cortex validates the talent with documented proof, and the client sees the full trail before making a single decision. Together they replaced the black box. And replacing the black box has been my mission since the first time a vendor told me to just trust them and it blew up in everyone’s face.

What You Should Be Asking Your Vendor Right Now

So if you are a CTO building a distributed engineering team or a VP of Engineering who keeps getting recycled profiles from your current vendor, here is what I would ask on the next call: Can you show me exactly how your talent search works? What data does it use? How does it handle bias? And what are your actual match numbers, not estimates, not projections, but real outcomes from real requisitions? While you are at it, ask them to show you a real total cost of ownership (TCO) model, because most vendors cannot.

If the answer is vague or they change the subject to talk about their “culture” or their “methodology” without showing you a single number, that tells you everything. Move on.

Platform Performance: Industry Benchmark vs. TeamStation AI

| KPI | Industry Benchmark | TeamStation AI |
|---|---|---|
| Time-to-Offer | 30-45 days | ≈9 days |
| Shortlist Relevance | 50-65% | ≥85% |
| Candidate Mismatch | 25-40% | ≤10% |
| Day-1 Tool Readiness | Variable / undisclosed | ≥95% |
| MDM Enrollment | Not offered | ≥99% within 24h |
| Audit-Ready Compliance | Varies by vendor | 100% |

Bottom Line

Nebula Neural Search exists because the nearshore IT staffing industry treated talent discovery as something clients should take on faith for too long. We built it to produce an evidence trail at every step, from search to shortlist to evaluation to team topology fit. If you want to see the science behind it, our peer-reviewed research is public, our case studies show what happens when the evidence trail replaces the black box, and the book lays out the full thesis for why this industry needed to be platformed from the ground up. The vendors who cannot do that are going to have a hard time explaining why, and the CTOs who keep accepting it are leaving money and time on the table that they are never getting back.

#NearshoreIT #TeamStationAI #NebulaNeuralSearch #LATAMTalent #SoftwareEngineering #TalentAlignment #CTOPlaybook #HiringTransparency #NearshoreOutsourcing #TeamTopologies #AxiomCortex

Resources & Deep Dives

| Resource | Link |
|---|---|
| CTO Playbook | |
| Engineering Doctrine | |
| Research Hub | |
| Case Studies | cto.teamstation.dev/case-studies |
| TCO Model | cto.teamstation.dev/playbook/tco-model |
| LATAM Talent Economics | cto.teamstation.dev/playbook/latam-economics |
| Axiom Cortex (Bias-Free Hiring) | cto.teamstation.dev/playbook/bias-free-technical-hiring-axiom-cortex |
| Book on Amazon | Platforming the Nearshore IT Staff Augmentation Industry |
| Google Scholar | scholar.google.com/citations?user=aNol-ycAAAAJ |
| Podcast | TeamStation Podcast on Spotify |

About the Author: Lonnie McRorey is CEO & Co-Founder of TeamStation AI, the Nearshore IT Co-Pilot platform, and co-author of Platforming the Nearshore IT Staff Augmentation Industry. He has been published in Forbes and Entrepreneur Magazine, and his peer-reviewed research is indexed on Google Scholar. Listen to the TeamStation Podcast for the full platform story, and explore our Engineering Doctrine and CTO Playbook.